Integrations

Pryvet can be integrated into a range of systems to give you privacy-compliant access to AI.

A single API for a variety of large language models

With Pryvet, you only need one interface to access a variety of state-of-the-art LLMs.

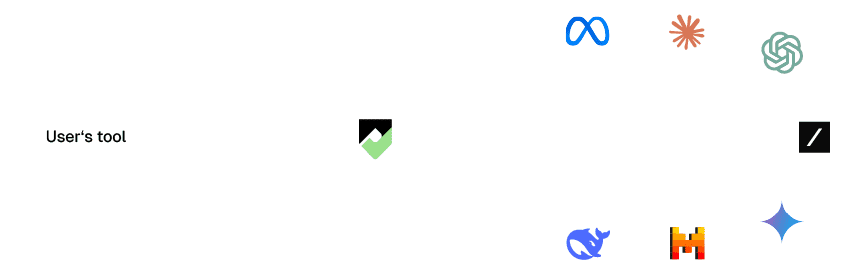

Secure Plug & Play LLM

Integrate Pryvet LLMs into your existing systems, tools, and processes as a Plug & Play replacement for other large language models. This allows you to use the familiar quality of your preferred LLMs combined with Pryvet’s security layers.

Pryvet’s secure interface offers a feature set, endpoints, and parameters comparable to those you already know from integrating popular foundation models such as those from OpenAI, Google, Mistral, Anthropic, and others.

- Seamless Integration
- Familiar API endpoints
- Protection through security layers
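
To illustrate what a Plug & Play replacement could look like in practice, here is a minimal sketch that builds (but does not send) a chat request in the style commonly used by OpenAI-compatible APIs. The base URL `https://pryvet.example/v1`, the `/chat/completions` path, the model name, and the bearer-token header are all assumptions for illustration; this page does not specify them, so take the real values from your Pryvet account documentation.

```python
import json
import urllib.request

# Hypothetical placeholder: the real Pryvet base URL is not given in this document.
PRYVET_BASE_URL = "https://pryvet.example/v1"


def build_chat_request(base_url: str, api_key: str, model: str,
                       user_message: str) -> urllib.request.Request:
    """Build an OpenAI-style chat request without sending it.

    Only `base_url` and `api_key` would change when switching providers;
    the payload shape stays the same. That shape is an assumption here.
    """
    payload = {
        "model": model,  # hypothetical model identifier
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request(
    PRYVET_BASE_URL, "YOUR_API_KEY", "example-model", "Summarize this contract."
)
print(request.full_url)
```

If the interface is compatible in the way described above, existing integration code would mainly need a different base URL and API key rather than a new request format.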

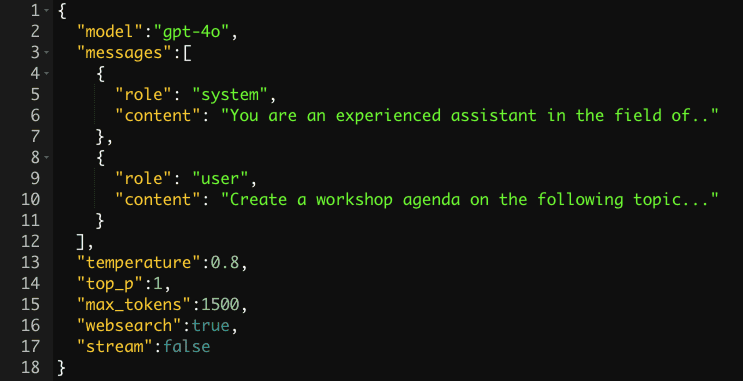

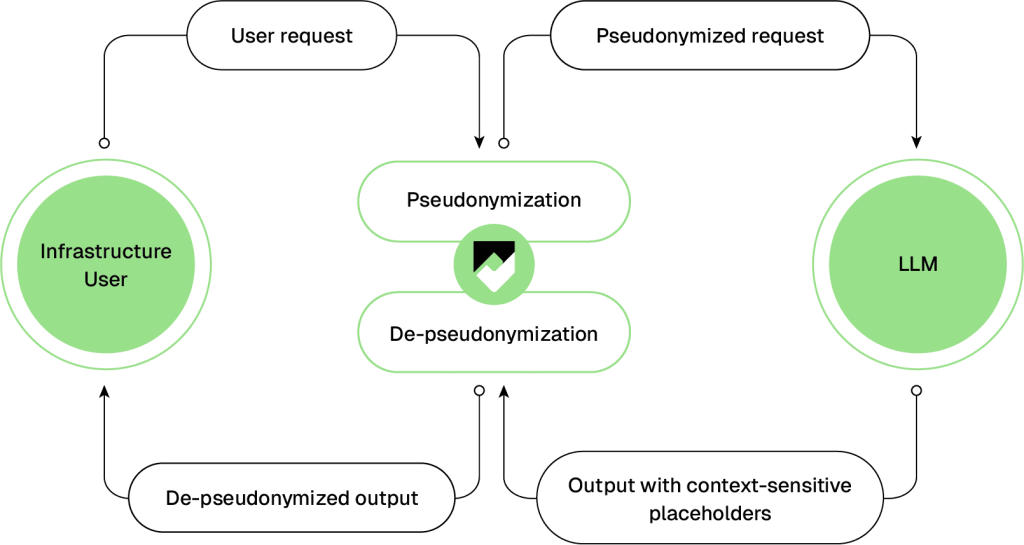

User request

A user sends a request containing sensitive data to the chatbot.

Pseudonymization

The Pryvet Security Layer, placed between the user and the Large Language Model, detects the sensitive data and replaces it with context-sensitive placeholders.

Pseudonymized request

The request with the context-sensitive placeholders is forwarded to the selected Large Language Model.

Output with context-sensitive placeholders

The LLM generates its response, which still contains the context-sensitive placeholders, and sends it to the Pryvet Security Layer.

De-pseudonymization

Within the Pryvet Security Layer, the context-sensitive placeholders are replaced with the original sensitive data.

De-pseudonymized output

The final response to the user query is sent to the user or the user's system with the original sensitive data.
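
The six steps above can be sketched as a small round trip. This is a simplified illustration, not Pryvet's implementation: detection is reduced to a regular expression for e-mail addresses, placeholders are plain typed labels such as `[EMAIL_1]`, and `fake_llm` is a hypothetical stand-in for the selected language model. All function names are assumptions made for this example.

```python
import re

# Simplified stand-in for sensitive-data detection: e-mail addresses only.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def pseudonymize(text: str) -> tuple[str, dict[str, str]]:
    """Replace each detected e-mail with a placeholder and return the mapping."""
    mapping: dict[str, str] = {}  # placeholder -> original value

    def replace(match: re.Match) -> str:
        value = match.group(0)
        # Reuse the same placeholder when a value occurs more than once.
        for placeholder, original in mapping.items():
            if original == value:
                return placeholder
        placeholder = f"[EMAIL_{len(mapping) + 1}]"
        mapping[placeholder] = value
        return placeholder

    return EMAIL_PATTERN.sub(replace, text), mapping


def depseudonymize(text: str, mapping: dict[str, str]) -> str:
    """Restore the original values for every placeholder in the text."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text


def fake_llm(prompt: str) -> str:
    """Hypothetical model stand-in: it only ever sees placeholders."""
    return f"Draft reply prepared for: {prompt}"


user_request = "Please reply to anna@example.com about the invoice."
safe_request, mapping = pseudonymize(user_request)   # pseudonymization
llm_output = fake_llm(safe_request)                  # output with placeholders
final_output = depseudonymize(llm_output, mapping)   # de-pseudonymization
print(final_output)
```

The key property is that the original value exists only in the mapping held by the security layer, so the model never receives it.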

PII-API

Integrate our detection of personally identifiable information (PII) and sensitive data into your system landscape. The detected entities can then be reused and enriched within your internal systems.
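
As a rough sketch of how detected entities might be consumed downstream, the example below groups them by type for use in an internal system. The `DetectedEntity` shape (type, text, character offsets) and the sample values are hypothetical; this page does not describe the actual PII-API response format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectedEntity:
    """Hypothetical entity record; the real response fields may differ."""
    entity_type: str
    text: str
    start: int  # character offset where the entity begins
    end: int    # character offset just past the entity


def group_by_type(entities: list[DetectedEntity]) -> dict[str, list[str]]:
    """Collect the detected values under their entity type."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for entity in entities:
        grouped[entity.entity_type].append(entity.text)
    return dict(grouped)


source_text = "Anna Schmidt, anna@example.com"
# Sample detection result, written by hand for illustration.
entities = [
    DetectedEntity("PERSON", "Anna Schmidt", 0, 12),
    DetectedEntity("EMAIL", "anna@example.com", 14, 30),
]
grouped = group_by_type(entities)
print(grouped)
```

Keeping the offsets alongside each value lets an internal system highlight, redact, or enrich the exact span in the original text.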

The power of AI – secure and privacy-compliant

With Pryvet, you opt for a privacy-compliant solution that pseudonymizes your sensitive data and provides you with secure access to the most popular language models.